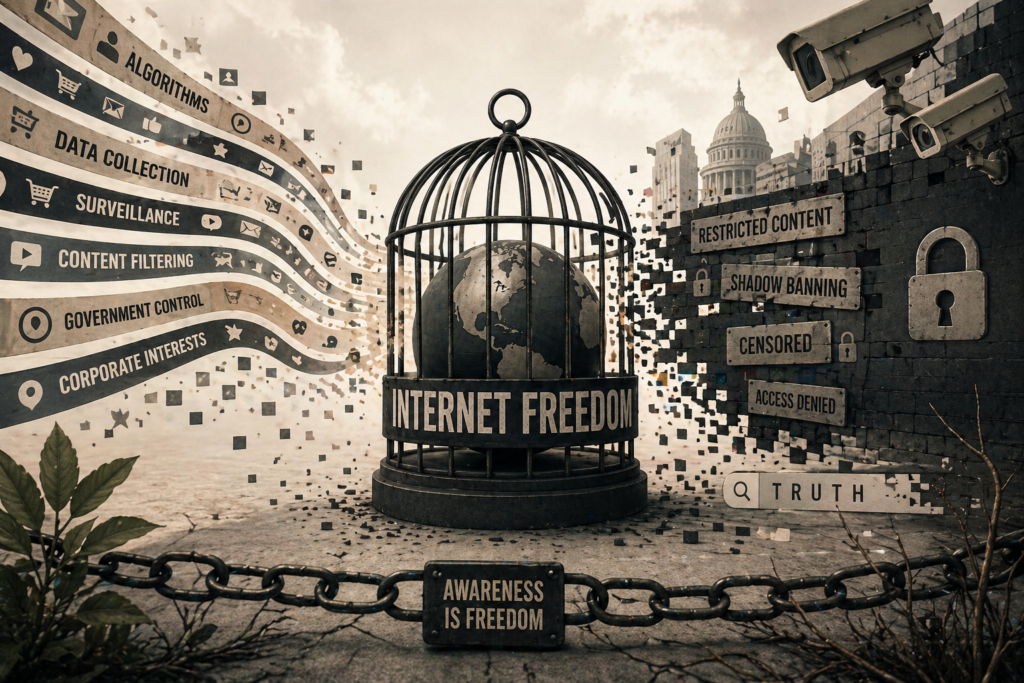

Internet Freedom in 2026: The Illusion of an Open World

The internet was supposed to be humanity’s greatest equalizer.

A place where information flows freely. Where anyone can speak. Where truth competes openly with lies — and wins.

But that version of the internet no longer exists.

What we have today is something far more complex. A system shaped not just by technology, but by power, incentives, and invisible layers of control that most users never see.

And the most dangerous part?

You don’t feel controlled.

You feel informed.

You feel connected.

You feel free.

That is what makes it work.

The Illusion of Choice

Every time you open a browser, search for something, or scroll through your feed, you believe you are navigating the internet on your own terms.

You choose what to click. What to read. What to believe.

But that sense of choice is carefully constructed.

Because before you ever see a piece of content, it has already passed through multiple layers of filtering, ranking, and prioritization.

Not randomly. Not neutrally.

But according to systems designed to decide what is most relevant, most engaging — and most valuable.

Google’s own documentation explains that search results are ranked based on hundreds of signals, including user behavior, authority, and context:

https://www.google.com/search/howsearchworks/

What it doesn’t fully explain is how those signals interact — and how they shape the reality each user experiences.

Because two people searching for the same thing can see different results, depending on factors such as location, language, and past activity.

That is not a bug.

It is the system working as intended.

From Open Web to Controlled Ecosystem

To understand what has changed, you need to understand what the internet used to be.

In its early years, the web was decentralized. Messy. Unfiltered.

There were no dominant platforms controlling distribution. No global algorithms deciding visibility. No centralized systems optimizing what people should see.

Information existed — and you had to find it.

Today, the opposite is true.

Information finds you.

And what finds you is not everything.

It is what platforms decide to show.

Large technology companies have effectively become the new gatekeepers of information.

Platforms like Google, Meta, and TikTok are no longer just tools. They are environments — ecosystems where visibility is controlled through complex ranking systems.

According to the Pew Research Center, a majority of users now rely on platforms — not direct websites — as their primary source of information.

This shift changes everything.

Because when platforms control access, they control exposure.

And when they control exposure…

They shape perception.

Algorithms: The Invisible Editors

In traditional media, editors decided what stories made the front page.

Today, algorithms do the same thing — at a scale no human system could ever achieve.

Every platform uses ranking systems to decide:

- What content appears first

- What content gets recommended

- What content disappears into obscurity

These systems are designed to maximize engagement.

Not truth. Not balance. Not fairness.

Engagement.

Research published in Science in 2018 found that false news spread significantly farther and faster on Twitter than true news, in part because it was more novel and provoked stronger emotional reactions.

This creates a powerful distortion:

The internet does not show what is most accurate.

It shows what keeps people watching.

And over time, that changes how people think.
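To make the point about engagement-first ranking concrete, here is a minimal sketch. It is not any platform's real ranking code: the `Post` fields, the scores, and the `rank_by_engagement` function are all hypothetical, chosen only to show that a feed sorted purely on predicted engagement never consults accuracy.

```python
from dataclasses import dataclass


@dataclass
class Post:
    title: str
    accuracy: float              # hypothetical 0..1 score, for illustration only
    predicted_engagement: float  # hypothetical 0..1 score, for illustration only


def rank_by_engagement(posts):
    """Order posts by predicted engagement alone; accuracy is never read."""
    return sorted(posts, key=lambda p: p.predicted_engagement, reverse=True)


posts = [
    Post("Careful explainer", accuracy=0.95, predicted_engagement=0.20),
    Post("Outrage headline", accuracy=0.40, predicted_engagement=0.90),
    Post("Balanced report", accuracy=0.85, predicted_engagement=0.35),
]

feed = rank_by_engagement(posts)
top_titles = [p.title for p in feed]
print(top_titles)
```

In this toy feed, the least accurate post lands first simply because it has the highest engagement score. Real systems combine many more signals, but the design choice being illustrated is the same: what gets optimized determines what gets seen.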

The Rise of Personalized Reality

One of the most significant transformations of the modern internet is personalization.

Every major platform tailors content based on:

- Your search history

- Your clicks

- Your location

- Your interests

- Your behavior patterns

This creates a feedback loop.

You click → the system learns → it shows you more of the same.

Over time, your digital environment becomes increasingly narrow.

More aligned with what you already believe.

Less exposed to opposing perspectives.

This phenomenon is known as the filter bubble, a term popularized by Eli Pariser.

Academic research from institutions like Oxford University has examined how algorithmic filtering can contribute to ideological isolation and polarization.

But the real issue is deeper.

You don’t just see less of the outside world.

You start believing your version of the world is the only one that exists.
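The click, learn, show-more loop described in this section can be sketched as a tiny simulation. Everything here is an assumption made for illustration: the topic names, the starting weights, the `learning_rate`, and the simplification that the user clicks only one preferred topic each round. It is a toy model, not a description of how any real recommender works.

```python
def simulate_feedback_loop(rounds, learning_rate=0.5):
    """Return the share of the feed taken by the user's preferred topic, per round."""
    # Hypothetical starting point: three topics shown in equal proportion.
    weights = {"topic_a": 1.0, "topic_b": 1.0, "topic_c": 1.0}
    preferred = "topic_a"  # assumption: the user clicks only this topic

    shares = []
    for _ in range(rounds):
        total = sum(weights.values())
        shares.append(weights[preferred] / total)
        # The system "learns" from the click and boosts the clicked topic.
        weights[preferred] += learning_rate
    return shares


shares = simulate_feedback_loop(rounds=10)
for round_number, share in enumerate(shares):
    print(f"round {round_number}: preferred topic fills {share:.0%} of the feed")
```

Under these assumptions the preferred topic starts at one third of the feed and climbs past 70% within ten rounds, while the other topics are never removed. They are simply crowded out.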

Control Without Force

Traditional censorship is visible.

A website is blocked. A post is deleted. A voice is silenced.

Modern control is different.

It doesn’t remove content.

It simply makes sure you never see it.

Content can be:

- Downranked (pushed lower in results)

- Suppressed (shown to fewer people)

- Demonetized (financially discouraged)

- Shadow-limited (restricted without notification)

These mechanisms are increasingly discussed in investigative reporting.

They represent a shift from hard censorship to soft control.

No bans. No warnings.

Just silence.

And silence is harder to detect.
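A short sketch shows how downranking differs from deletion. The item names, base scores, and the `demotions` multiplier table are hypothetical, and real moderation systems are far more involved. The only point is that a demoted item stays in the index while its effective score shrinks.

```python
def visible_ranking(items, demotions):
    """Return item ids ordered by score after applying demotion multipliers.

    Nothing is deleted: a demoted item stays in the list and simply
    receives a smaller effective score.
    """
    def effective_score(item):
        item_id, base_score = item
        return base_score * demotions.get(item_id, 1.0)

    ranked = sorted(items, key=effective_score, reverse=True)
    return [item_id for item_id, _ in ranked]


# Hypothetical items with illustrative base relevance scores.
items = [("article_a", 0.9), ("article_b", 0.7), ("article_c", 0.6)]

before = visible_ranking(items, demotions={})
after = visible_ranking(items, demotions={"article_a": 0.5})
print(before)
print(after)
```

In this example `article_a` moves from first place to last once its score is halved, yet it is still present in the results. A user who only reads the top of the list would have no way to tell that anything changed.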

The Core Problem

The problem is not that the internet is controlled.

The problem is that the control is largely invisible.

Users still feel autonomous.

They still believe they are exploring freely.

But in reality, they are navigating a space shaped by systems they do not understand — and cannot see.

This creates a fundamental shift in what internet freedom actually means.

It is no longer about access.

It is about awareness.

Because in a system where everything is technically available…

What matters most is what becomes visible.

Government Power: Control Without Blocking

When people think about restricted internet freedom, they often imagine countries where websites are openly blocked.

China. Iran. North Korea.

But modern control in democratic systems works differently.

It is not based on blocking access.

It is based on influencing visibility.

This shift is critical.

Because when something is blocked, people notice.

But when something is quietly pushed out of reach…

most people never realize it existed in the first place.

Regulation as a Tool of Control

Governments today rarely censor directly. Instead, they regulate platforms.

This creates a layer of indirect control that is harder to challenge — and easier to justify.

One of the most significant examples is the European Union’s Digital Services Act (DSA):

https://digital-strategy.ec.europa.eu/en/policies/digital-services-act-package

The DSA requires platforms to:

- Remove illegal content quickly

- Limit harmful or misleading information

- Assess systemic risks

- Increase transparency in moderation

On paper, this protects users.

In practice, it introduces a powerful dynamic:

Platforms become responsible for deciding what is acceptable.

And when companies face legal pressure, they tend to over-correct.

They remove more.

They suppress more.

They take fewer risks.

Because the cost of allowing controversial content is higher than the cost of hiding it.

This creates a chilling effect — not through bans, but through caution.

The Blurred Line Between Safety and Control

The internet is not a simple environment.

There is real harm online:

- Disinformation

- Extremism

- Harassment

Regulation exists for a reason.

But the challenge is this:

Who decides where protection ends and control begins?

Because every system that removes harmful content must also define what “harmful” means.

And definitions are never neutral.

They are shaped by:

- Political priorities

- Cultural context

- Institutional incentives

This creates a fundamental tension at the heart of internet freedom.

More control can make the internet safer.

But it can also make it narrower.

Surveillance: The Price of Participation

Freedom is not just about what you see.

It is also about what is known about you.

And in 2026, what is known about you can be summed up simply:

Almost everything.

Modern internet infrastructure is built on data collection.

Every action leaves a trace:

- Search queries

- Clicks

- Scroll behavior

- Location data

- Device information

Organizations like the Electronic Frontier Foundation have repeatedly warned that users are subject to continuous tracking — often without meaningful awareness.

This data is not only used for advertising.

It is used to predict behavior.

To optimize engagement.

To shape decision-making environments.

And increasingly, to inform policy and enforcement.

The Data Economy: Why Control Exists

To understand why the internet looks the way it does, you need to understand its business model.

The internet is not funded by access.

It is funded by attention.

And attention is monetized through data.

Companies collect data to:

- Target advertising

- Improve algorithms

- Increase engagement

This creates a system where:

The more precisely a platform can predict you, the more valuable you become.

But prediction requires data.

And data requires surveillance.

This is not hidden.

It is openly described in corporate reports, regulatory filings, and academic research.

Yet most users never fully grasp the scale.

Because data collection is not experienced as control.

It is experienced as convenience.

Personalized recommendations.

Relevant content.

Seamless experiences.

And that is exactly why it works.

Case Study: How Visibility Is Quietly Shaped

Let’s break this down into a concrete scenario.

A journalist publishes an article challenging a dominant narrative.

The article is not removed.

It is not banned.

But:

- It ranks lower in search results

- It is not recommended by platforms

- It reaches fewer users organically

Meanwhile, other content — aligned with dominant trends — is amplified.

No explicit censorship occurs.

But the outcome is the same.

One version of reality becomes visible.

Another fades away.

This dynamic has been explored in multiple academic and journalistic investigations.

These studies highlight a key point:

Modern information control is not about deletion.

It is about distribution.

The System Nobody Sees

The modern internet operates through layers:

- Infrastructure (networks, servers)

- Platforms (search engines, social media)

- Algorithms (ranking, recommendation)

- Policies (rules, moderation systems)

Each layer introduces its own form of influence.

Individually, they seem manageable.

Together, they create a system that shapes information flow at a global scale.

And yet, for the average user, this system is invisible.

No dashboard shows it.

No interface explains it.

No warning appears.

Which leads to the most important realization:

The greatest limitation of internet freedom today is not restriction.

It is lack of awareness.

The Future of Internet Freedom: Where This Is Going

At this point, the question is no longer whether the internet is controlled.

It is.

The real question is:

What happens next?

Because the systems shaping what people see online are not slowing down.

They are becoming more advanced, more precise, and more deeply integrated into everyday life.

Artificial intelligence is now embedded into search engines, recommendation systems, moderation tools, and even content creation itself.

This changes the nature of control completely.

Instead of simply ranking or filtering information…

systems are starting to generate it.

And when systems generate content, the line between reality and constructed information becomes even harder to detect.

AI and the Next Layer of Influence

AI-driven systems are designed to predict what users want — often before they even express it.

They analyze patterns across billions of interactions and use that data to shape what appears in front of you.

This includes:

- Search summaries

- Recommended articles

- Video suggestions

- Automated answers

Major platforms openly acknowledge the growing role of AI in content delivery.

This introduces a critical shift:

You are no longer just choosing information.

You are consuming interpretations of information created by systems.

And those interpretations are influenced by:

- Training data

- Optimization goals

- Platform incentives

Which means:

The system doesn’t just show reality anymore.

It helps construct it.

The Fragmentation of Truth

One of the most significant consequences of this system is the fragmentation of truth.

Different users experience different versions of the internet.

Different search results.

Different news exposure.

Different narratives.

Over time, this leads to a world where:

- People no longer agree on basic facts

- Public discourse becomes polarized

- Shared reality weakens

This issue has been extensively studied by institutions such as the Reuters Institute for the Study of Journalism.

The conclusion is consistent:

The structure of the modern internet amplifies division.

Not necessarily by intention.

But as a side effect of its design.

Can Internet Freedom Be Restored?

There is no simple solution.

Because the current system is not broken.

It is working exactly as designed.

Platforms maximize engagement.

Governments manage risk.

Users seek convenience.

And all of these forces reinforce each other.

However, that does not mean nothing can change.

There are three areas where the future of internet freedom will be decided:

1. Transparency

Users are beginning to demand more visibility into how systems work.

Transparency reports from companies like Google and Meta are a step forward, but they remain limited.

True transparency would require:

- Clear explanation of ranking systems

- Insight into content suppression mechanisms

- Independent audits of algorithms

Without this, control remains hidden.

2. Regulation

Regulation will continue to expand.

The key challenge will be balance.

Too little regulation allows harmful content to spread.

Too much regulation concentrates power.

And concentrated power always risks misuse.

3. User Awareness

This is the most underestimated factor.

Because systems rely on users not understanding how they work.

The moment users become aware of:

- Algorithmic filtering

- Data collection practices

- Content prioritization

The system becomes less effective at shaping perception.

Awareness does not remove control.

But it reduces its influence.

The Final Reality

The internet is not free in the way most people imagine.

It is accessible.

It is powerful.

It is global.

But it is also structured, filtered, and influenced at every level.

And yet, there is still something important to understand:

Freedom has not disappeared.

It has changed form.

It is no longer about unlimited access.

It is about the ability to recognize how the system works — and to navigate it consciously.

Because once you see the patterns…

You stop confusing visibility with truth.

You stop assuming neutrality.

You start questioning what appears in front of you.

And that is where real internet freedom begins.

One Question to Remember

Next time you search, scroll, or click, ask yourself one simple question:

Why am I seeing this — and what am I not seeing?

Because the answer to that question is the difference between passive consumption…

and true awareness.