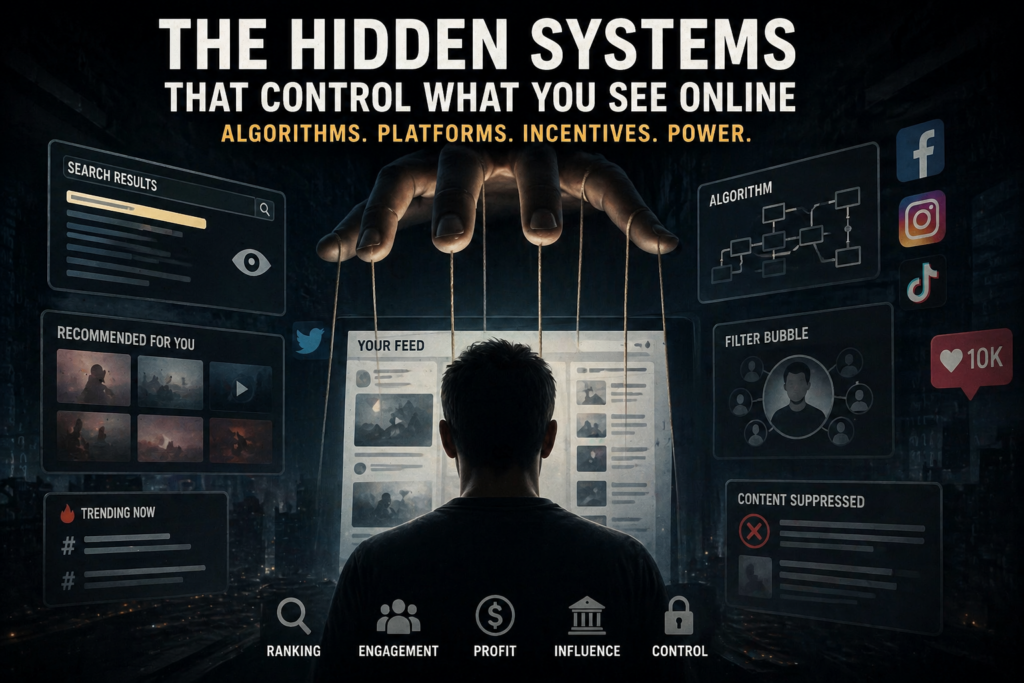

The Hidden Systems That Control What You See Online

Every time you open a website, search for information, or scroll through a social media feed, it feels like you are freely exploring the internet.

But in reality, you are rarely seeing the internet itself.

You are seeing a selected version of it — ranked, filtered, recommended, suppressed, personalized, monetized, and shaped before it reaches your screen.

This does not mean that every platform is secretly controlled by one person, one government, or one company. The truth is more complex — and in many ways more important. What you see online is shaped by systems: search ranking systems, recommendation algorithms, moderation rules, advertising incentives, government requests, legal pressure, corporate policies, and increasingly, artificial intelligence.

These systems are often invisible to ordinary users. You do not see the thousands of pages that never appear in search results. You do not see the posts that were downranked before they reached your feed. You do not see the videos that were not recommended, the stories that were demonetized, the accounts that were restricted, or the search results that changed because of location, language, ranking signals, or policy decisions.

That is why the modern internet can feel open while still being deeply controlled.

The question is no longer whether information exists online. In most cases, it does. The real question is whether people can find it, whether platforms decide to show it, whether algorithms amplify it, and whether governments or corporations have incentives to make it disappear from view.

The Internet Did Not Disappear. Visibility Did.

The old idea of the internet was simple: publish something online, and the world could access it. In theory, anyone with a website could reach anyone else. A small blog could compete with a newspaper. A researcher could publish independently. A whistleblower could share evidence. A citizen could document abuse. A journalist could bypass traditional gatekeepers.

That idea still exists — but only partially.

Today, publishing something online is not the same as being visible. A page can exist and still be functionally invisible. A video can be uploaded and never recommended. A post can remain online but be pushed so far down in a feed that almost nobody sees it. A website can be indexed by search engines but appear so low in results that it effectively does not exist for ordinary users.

This is one of the most important changes in the digital world:

Control no longer has to mean deleting information. It can simply mean reducing its visibility.

That is a quieter form of control. It is harder to prove, harder to notice, and harder to challenge. When a website is blocked, people can see that access has been denied. But when an article is downranked, a post is buried, or a topic quietly disappears from recommendation systems, most users never know that anything happened.

This is why visibility is power.

Search Engines Are Not Neutral Windows Into Reality

For many people, Google is the front door of the internet. If something appears at the top of search results, it feels authoritative. If something is hard to find, people often assume it is unimportant, unreliable, or nonexistent.

But search results are not a neutral list of everything online. They are the product of automated systems that crawl, index, evaluate, and rank pages. Google’s own documentation explains that Search works in stages: pages must be discovered, crawled, indexed, and then served in response to queries. Google also says that not every page makes it through every stage.

That matters because every stage creates a filter.

- If a page is not crawled, it may never enter the system.

- If a page is not indexed, it cannot appear in search results.

- If a page is indexed but ranked poorly, most people will never see it.

- If a page is technically available but buried beneath stronger domains, it becomes practically invisible.
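The stages above can be pictured as a chain of filters. The following is only a minimal sketch: the `Page` fields, the example URLs, the scores, and the `top_k` cutoff are all hypothetical, and real search systems are far more complex than a single numeric score.

```python
from dataclasses import dataclass


@dataclass
class Page:
    url: str
    crawled: bool
    indexed: bool
    score: float  # hypothetical ranking score; higher ranks first


def visible_results(pages, top_k=2):
    """Return the URLs a typical user would actually see."""
    # Stage 1: a page that is never crawled never enters the system.
    crawled = [p for p in pages if p.crawled]
    # Stage 2: a crawled page that is not indexed cannot be served.
    indexed = [p for p in crawled if p.indexed]
    # Stage 3: indexed pages are ordered by the ranking score.
    ranked = sorted(indexed, key=lambda p: p.score, reverse=True)
    # Stage 4: only the first few results are realistically seen.
    return [p.url for p in ranked[:top_k]]


# Hypothetical pages; none of these values come from a real search engine.
pages = [
    Page("bignews.example/story", True, True, 0.92),
    Page("smallblog.example/post", True, True, 0.31),
    Page("archive.example/report", True, False, 0.80),
    Page("forum.example/thread", False, False, 0.75),
    Page("encyclopedia.example/entry", True, True, 0.88),
]

print(visible_results(pages))
```

In this toy run, all five pages exist online, but only two are returned. The small blog is indexed and still never shown, which is the kind of practical invisibility described above.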

Google also states that its ranking systems use a variety of signals and systems to understand how to rank pages. That is not inherently wrong. Search engines need ranking systems. Without them, the internet would be unusable. But it means that search is not simply “show me the web.” It is “show me the web according to a ranking system.”

That distinction is critical.

Search rankings shape what people believe is important. They influence what journalists discover, what students cite, what voters read, what consumers buy, and what narratives become dominant. A search engine does not need to ban a perspective to reduce its influence. It only needs to rank other perspectives above it.

This is why information control in the modern era is often not about total censorship. It is about hierarchy.

Recommendation Systems Changed the Internet Even More

Search engines changed how people find information. Recommendation systems changed what people consume without searching at all.

Social media feeds, video platforms, short-form video apps, and news aggregators all rely on recommendation systems. These systems decide what to place in front of users next. They decide which video follows another video, which post appears in the feed, which topic trends, which creator grows, and which conversation becomes visible.

Unlike search, recommendation systems do not wait for a user to ask a question. They predict what the user might engage with and deliver it automatically.

That makes them extremely powerful.

If search engines shape discovery, recommendation systems shape attention.

And attention is the currency of the internet.

Most platforms are not primarily designed to give users the most balanced view of reality. They are designed to keep users engaged. That means recommendation systems often prioritize content that produces reactions: anger, fear, excitement, outrage, desire, identity, conflict, or belonging.

This does not always mean the content is false. Sometimes it is true. Sometimes it is half true. Sometimes it is misleading. Sometimes it is simply emotionally powerful. The system does not necessarily understand truth in the human sense. It understands signals.

Did people click?

Did they watch?

Did they share?

Did they comment?

Did they stay longer?

Those signals become feedback. The feedback trains the system. The system changes what other people see.
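As a rough illustration, a feed ranked purely on these signals might look like the sketch below. The weights, field names, and example posts are assumptions made up for this example; platforms do not publish their real formulas, and actual systems use many more inputs.

```python
# Hypothetical weights for each engagement signal (not from any real platform).
WEIGHTS = {"clicks": 1.0, "watch_minutes": 0.5, "shares": 3.0, "comments": 2.0}


def engagement_score(signals):
    """Combine raw engagement counts into a single number."""
    return sum(WEIGHTS[name] * signals.get(name, 0) for name in WEIGHTS)


def rank_feed(posts):
    """Order posts by engagement score, highest first."""
    return sorted(posts, key=lambda post: engagement_score(post["signals"]), reverse=True)


# Two made-up posts with made-up engagement counts.
posts = [
    {"title": "Careful explainer",
     "signals": {"clicks": 40, "watch_minutes": 120, "shares": 2, "comments": 3}},
    {"title": "Outrage clip",
     "signals": {"clicks": 90, "watch_minutes": 60, "shares": 30, "comments": 45}},
]

for post in rank_feed(posts):
    print(post["title"], engagement_score(post["signals"]))
```

Nothing in this scoring function asks whether a post is accurate. It only measures how people reacted, so the post that provokes more reactions is placed first.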

Over time, the platform does not merely reflect public attention. It manufactures it.

The Facebook Papers Showed the Problem Was Not Imaginary

One of the clearest real-world examples came from Facebook’s internal documents, widely reported as the Facebook Files or Facebook Papers. The Wall Street Journal reviewed internal Facebook materials and reported that the company’s own research raised concerns about how its systems affected users and public discourse.

The key lesson was not simply that one company made mistakes. The larger lesson was that platforms can understand serious harms inside their systems while still being pulled by incentives that reward engagement and growth.

That is the central conflict of the modern internet:

What is good for engagement is not always good for society.

A platform may say it wants meaningful connection, reliable information, and safer discourse. But if its business model rewards time spent, reactions, and repeated use, then the system has a constant incentive to amplify content that keeps people emotionally involved.

This is not a small design issue. It is structural.

When billions of users depend on a few platforms to understand the world, the internal choices of those platforms become public infrastructure. Yet those choices are often made inside private companies, protected by trade secrets, explained only in broad language, and visible to outsiders mostly through leaks, whistleblowers, lawsuits, academic research, or transparency reports.

YouTube and the Power of Suggested Reality

YouTube provides another important case study. The platform is not only a place where people search for videos. It is also a recommendation engine. For many users, the most important part of YouTube is not the search bar — it is the next suggested video.

Data & Society’s 2018 report “Alternative Influence” examined a network of political influencers on YouTube and described how creators used the platform to build audiences and spread ideology. The report did not argue that one algorithm alone explains everything. But it highlighted something broader: recommendation-driven media environments can help connect audiences, personalities, and ideas in ways that shape political and cultural understanding.

This matters because users often experience recommendations as natural. They click one video, then another, then another. The path feels personal. But the path is also designed.

And design has consequences.

When a platform chooses what to recommend, it is not merely helping users find content. It is creating pathways of attention. Those pathways can educate, entertain, radicalize, distract, inform, or mislead. The same system that helps someone find a useful tutorial can also push another person into increasingly narrow or extreme information environments.

The issue is not that recommendation systems are always malicious. The issue is that they are powerful, opaque, and optimized for goals that may not align with truth, balance, or public interest.

Government Pressure Is Another Layer of Control

Platforms and algorithms are only one part of the story. Governments also influence what people can see online.

Sometimes this happens openly through laws, court orders, website blocking, or direct censorship. Sometimes it happens through softer pressure: regulatory threats, informal requests, national security claims, election rules, platform liability, or demands to remove content that violates local law.

Google’s Transparency Report includes a dedicated section for government requests to remove content. Google explains that governments may request removal of information from Google products such as YouTube videos or blog posts, and that requests can come through court orders or government agencies.

Again, not every request is illegitimate. Some content is illegal. Some content is harmful. Some removals may be justified. But the existence of formal removal systems shows that the online information environment is not simply governed by users and platforms. States are also involved.

Freedom House’s Freedom on the Net project has documented a long-term decline in global internet freedom. In its 2024 reporting, Freedom House stated that global internet freedom declined for the 14th consecutive year. That finding matters because it shows that the pressure on online freedom is not a fringe concern. It is a global trend documented by a major human rights organization.

The internet is not moving automatically toward openness. In many places, it is moving toward greater control.

Why This Is Not Just “Censorship” in the Old Sense

When people hear the word censorship, they often imagine a government banning a newspaper, blocking a website, or arresting a writer. Those things still happen. But the modern system is broader and more complicated.

Today, control can happen through:

- Search ranking

- Recommendation systems

- Platform moderation

- Advertising rules

- Demonetization

- Government removal requests

- Copyright claims

- App store policies

- Payment processor restrictions

- AI-generated summaries that replace source discovery

This means a voice does not need to be officially banned to lose reach. It can be made harder to find, harder to fund, harder to share, harder to recommend, or harder to trust.

That is why the phrase “information control” is more accurate than censorship alone.

Censorship is one form of control. But control also includes ranking, amplification, suppression, recommendation, monetization, and access.

The hidden systems that shape what you see online are not one machine. They are a stack of machines, rules, incentives, and decisions layered on top of one another.

The Invisible Hand of Money: Advertising Drives Everything

If you want to understand why the internet looks the way it does today, you need to follow one thing: money.

Most major platforms — Google, Facebook, YouTube, TikTok, X (Twitter) — are not built primarily as information platforms.

They are advertising platforms.

Google itself generates the majority of its revenue from advertising. Meta (Facebook, Instagram) does the same. Their business model is simple:

- Capture attention

- Keep users engaged

- Sell that attention to advertisers

This creates a fundamental shift in how information is prioritized.

The goal is no longer simply to provide the most accurate or balanced information.

The goal is to maximize engagement, because engagement generates revenue.

And engagement does not always align with truth.

According to a 2018 study by MIT researchers published in Science, false news spread faster and more widely than true news on Twitter.

Why?

Because it is often more novel, more emotional, and more engaging.

That creates a dangerous alignment:

The content that performs best is not always the content that is most accurate — it is the content that keeps people reacting.

Demonetization: Control Without Deletion

There is another powerful tool that shapes what you see online: monetization.

Creators, journalists, and independent platforms often depend on revenue from ads, sponsorships, or platform monetization systems.

But those systems are controlled by the platforms themselves.

This means platforms do not need to remove content to influence it.

They can simply remove the financial incentive.

This is called demonetization.

A video can stay online but lose ad revenue. A creator can continue posting but earn almost nothing. A topic can become “less profitable” and gradually disappear because fewer creators are willing to cover it.

According to reporting by The Guardian, YouTube has repeatedly adjusted its monetization policies, affecting which types of content are considered advertiser-friendly.

These changes often impact entire categories of content.

This creates a subtle but powerful form of control:

- Content is not banned

- But it becomes financially unsustainable

- And slowly disappears from visibility

From the outside, it looks like natural decline.

In reality, it is system-driven.

Algorithmic Amplification: What Gets Bigger Gets Bigger

One of the most important properties of modern platforms is amplification.

Algorithms do not treat all content equally.

They amplify certain content.

That amplification can compound quickly.

A post that performs well early gets pushed to more users. More users engage. That engagement signals the system to push it further.

This creates a feedback loop where:

- Popular content becomes more popular

- Engaging content becomes dominant

- Less engaging content disappears
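The loop described above can be approximated with a toy simulation. This is a sketch under stated assumptions, not a model of any real platform: the function name, the engagement rates, and the rule that exposure is split in proportion to past engagement are all hypothetical simplifications.

```python
def simulate_amplification(engagement_rates, rounds=5, impressions_per_round=1000):
    """Return each post's share of total engagement after several rounds.

    engagement_rates maps a post name to the assumed fraction of viewers
    who engage with it each time it is shown.
    """
    # Give every post one unit of engagement so each gets some initial exposure.
    engagement = {name: 1.0 for name in engagement_rates}
    for _ in range(rounds):
        total = sum(engagement.values())
        # Exposure is allocated by each post's share of engagement so far.
        exposure = {name: engagement[name] / total for name in engagement}
        for name, rate in engagement_rates.items():
            shown = impressions_per_round * exposure[name]
            # New engagement feeds back into the next round's allocation.
            engagement[name] += shown * rate
    total = sum(engagement.values())
    return {name: value / total for name, value in engagement.items()}


# Two hypothetical posts: one is only somewhat more engaging than the other.
rates = {"post_a": 0.10, "post_b": 0.05}
print(simulate_amplification(rates, rounds=5))
print(simulate_amplification(rates, rounds=20))
```

Both posts start with equal exposure. Because exposure follows past engagement, the more engaging post takes a growing share with every round, even though no one decided to suppress the other.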

This is not necessarily a conspiracy.

It is how optimization systems work.

But it has consequences.

It means that the internet is not a balanced representation of reality.

It is a distorted representation — shaped by what performs best within the system.

Political Influence and Information Power

Where attention goes, power follows.

And where power exists, influence follows.

Governments, political groups, and state actors understand this.

According to reports from the FBI and multiple academic studies, foreign and domestic actors have used digital platforms to influence public opinion.

This does not always mean direct censorship.

In many cases, influence works through amplification.

Instead of removing content, actors can:

- Promote specific narratives

- Exploit algorithmic systems

- Target specific audiences

- Use coordinated campaigns

The goal is not necessarily to convince everyone.

It is to shift perception.

Even small shifts in perception can have large consequences in elections, public debates, and societal trust.

The Rise of AI as a New Layer of Control

The next layer is already here: artificial intelligence.

Search engines are integrating AI-generated answers.

Platforms are using AI to filter, rank, summarize, and even generate content.

This changes the system again.

Instead of showing users multiple sources, AI can generate a single summarized answer.

This reduces the need to explore different perspectives.

It centralizes interpretation.

Google itself has introduced AI Overviews in Search, where a generated summary appears at the top of the results, above the traditional list of links.

This creates a new kind of gatekeeping:

- Not just which sources are shown

- But how information is interpreted before the user even sees it

This is a massive shift.

For the first time, the system is not only ranking content.

It is actively shaping the narrative.

Why Most People Never Notice

The most effective systems are the ones you do not see.

Most users believe they are simply browsing freely.

They do not see:

- The posts that were never shown

- The pages that were never indexed

- The videos that were never recommended

- The content that was quietly deprioritized

There is no alert saying: “This information was hidden from you.”

There is no message saying: “This topic was downranked.”

The system feels natural.

And that is why it works.

Control that is invisible is far more powerful than control that is obvious.

Real-World Consequences: This Is Already Happening

This is not a theoretical discussion about technology.

The effects of these systems are already shaping the real world.

They influence elections, public opinion, mental health, journalism, and even how people understand reality itself.

During major global events — elections, pandemics, wars — digital platforms become the primary source of information for millions of people.

But those people are not all seeing the same information.

They are seeing different versions of reality.

Filtered. Ranked. Personalized.

According to Pew Research Center, a large and growing portion of the population relies on digital platforms as a primary source of news.

This means that the systems controlling visibility are no longer just shaping entertainment.

They are shaping collective understanding.

The Fragmentation of Reality

One of the most dangerous outcomes is fragmentation.

In the past, people disagreed — but they often shared the same basic set of facts.

Today, many people do not even share the same information space.

They live in parallel realities.

One group sees one version of events.

Another group sees something completely different.

Both believe they are informed.

Both believe the other side is misled.

This is not just a political issue.

It is a structural result of personalized information systems.

When algorithms continuously adapt to individual behavior, they stop showing diverse perspectives.

They show reinforcing ones.

This creates echo chambers.

And echo chambers create certainty.

Not truth — certainty.

Trust Is Eroding, and That May Be the Biggest Risk

When people realize that information is filtered, ranked, and influenced, something deeper begins to break.

Trust.

Trust in media.

Trust in institutions.

Trust in platforms.

Trust in each other.

According to the Edelman Trust Barometer, global trust in institutions has become increasingly fragile.

Part of this erosion is directly connected to how information is distributed online.

When people cannot agree on what is real, they cannot agree on what is true.

And when they cannot agree on truth, decision-making becomes unstable.

The Illusion of Control vs. The Reality of Influence

Most users believe they are in control of what they consume.

They choose what to click.

They choose who to follow.

They choose what to watch.

But those choices are influenced by what is shown.

And what is shown is not neutral.

This creates an illusion of control.

You are not forced to see something.

But you are guided toward it.

Repeatedly.

Quietly.

At scale.

Over time, this guidance becomes invisible.

And what feels like personal choice becomes system-shaped behavior.

What Can Be Done?

There is no simple solution.

The systems are complex.

The incentives are powerful.

The scale is massive.

But awareness is the first step.

Users can:

- Question what they see

- Compare multiple sources

- Actively search beyond recommendations

- Understand that visibility is not neutrality

Platforms can:

- Increase transparency

- Explain ranking systems more clearly

- Provide users with more control over feeds

Governments can:

- Regulate transparency

- Protect free access to information

- Avoid overreach that leads to censorship

But none of this is easy.

Because the core driver — attention and profit — is still the dominant force.

The Future of Information Control

The next phase of the internet will not be less controlled.

It will likely be more sophisticated.

More personalized.

More predictive.

And more invisible.

Artificial intelligence will accelerate this.

Instead of choosing from content, users will increasingly receive synthesized answers.

This reduces friction.

But it also reduces diversity of perspective.

The question is no longer:

“Is information available?”

The question is:

“Who decides what version of information reaches you?”

Final Thought: You Are Not Seeing the Internet

The internet feels infinite.

But what you see is not infinite.

It is curated.

Structured.

Ranked.

Filtered.

Designed.

Every click, every scroll, every recommendation is part of a system.

And that system is shaping your perception of reality.

You are not just using the internet.

The internet is shaping how you think, what you believe, and what you consider to be true.

The most important question is not whether these systems exist.

They do.

The question is whether you are aware of them.

Because if you are not, you are not just observing the system.

You are inside it.
